The AI Innovator’s Dilemma in Marketing

Why large enterprises struggle to use AI’s full potential. Five structural barriers, and a research-backed playbook for marketing leaders who want to break through.

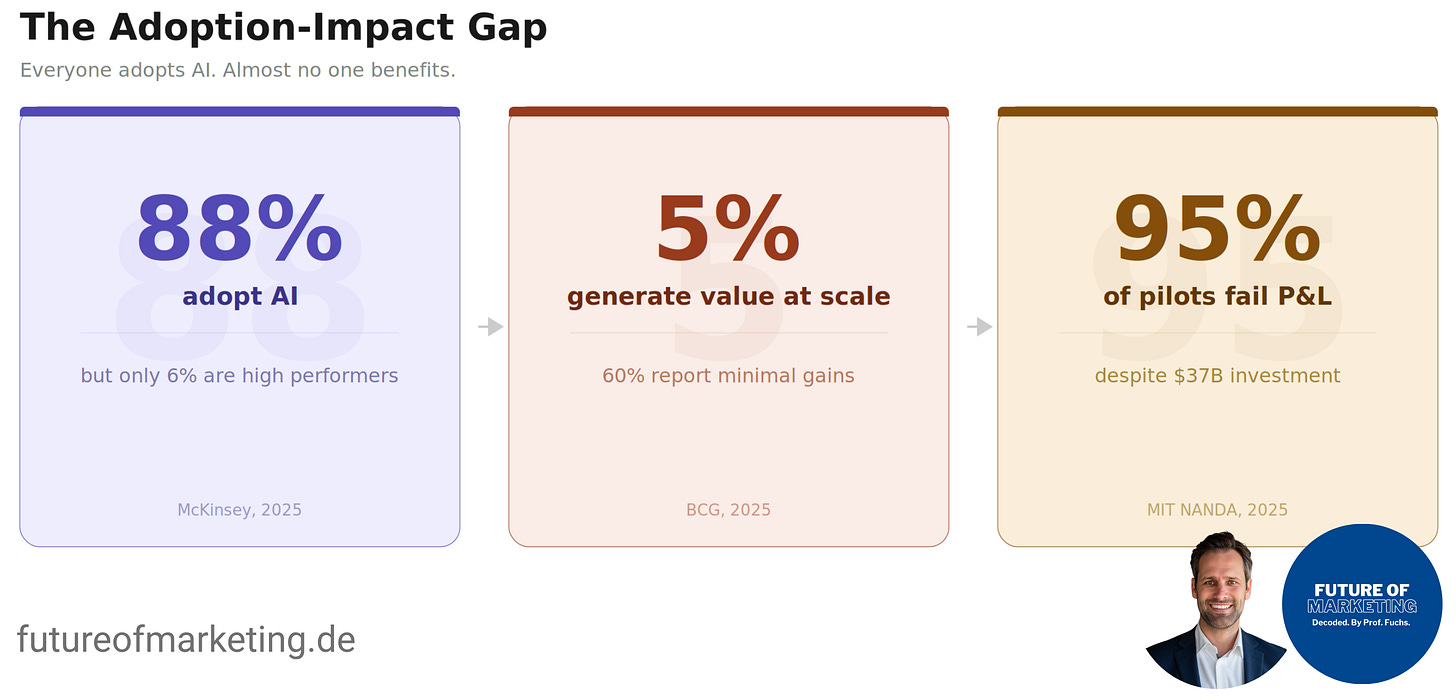

Why read this article: It explains why 88% of enterprises adopt AI but only 5-6% generate real business value from it, and why the root cause is organizational, not technological. The article applies Clayton Christensen’s Innovator’s Dilemma framework to the current AI adoption crisis in marketing, identifies five structural barriers that reinforce each other, and provides a research-backed playbook for marketing leaders who want to escape the trap before the gap to AI-native competitors becomes irreversible.

Over the past weeks, I've written a lot about what AI can do for marketing: the Claude for Marketing mini-series, the practical guides. And every single time I talk to someone working inside a large organization about these topics, I hear the same thing: "That's great, but at our company, none of this works."

A friend of mine works at Mercedes-Benz. His company rolled out Microsoft Copilot as their official AI tool (like most large enterprises with Microsoft 365, it was the path of least resistance). Deployed, available, supported by IT.

He says it’s useless.

Not in a hyperbolic way. In a very literal way. The tool sits on top of the organization like a disconnected shell:

It can’t access internal tools or databases.

It can’t read strategy documents.

It doesn’t know what projects are running.

It has no idea what the company decided last quarter.

Now here’s the part that should worry every marketing leader reading this.

If a five-person startup decided today to deploy AI across their entire operation, connect it to every system and data source they have, and start working with it as an integrated part of their team, they could realistically do it by tomorrow afternoon.

Mercedes can’t do that. Neither can BMW, Siemens, Unilever, or most Fortune 500 companies. Not because they lack budget (enterprises spent $37 billion on generative AI in 2025 alone). Not because they lack talent. And not because the technology isn’t ready.

They can’t do it because their own organizations won’t let them.

That’s the trap. And Clayton Christensen diagnosed it almost 30 years ago.

The Framework: Christensen’s Innovator’s Dilemma

In 1997, Harvard professor Clayton M. Christensen published The Innovator’s Dilemma. His core argument remains one of the most powerful ideas in strategy: good management itself causes failure when companies face disruptive technologies. His RPV framework (resources, processes, values) explains why. Resources (people, capital, tools) are flexible: you can redirect them. But processes and values (how decisions get made, what gets prioritized) become embedded in organizational culture. They resist change even when the people inside the organization want to change. The very practices that drive success in established markets are the ones that reject disruptive technologies. AI in the enterprise is following this pattern with remarkable precision.

Henderson and Clark (1990) added a critical dimension: firms fail not because they can’t build or buy new components (the AI tools exist), but because they can’t reconfigure the relationships between components. AI changes how data, creative, targeting, measurement, and optimization relate to each other. That makes it deceptively dangerous for incumbents, because it looks incremental on the surface.

The Paradox: Everyone Adopts AI, Almost No One Benefits

The data on enterprise AI adoption tells a paradox that Christensen would immediately recognize.

88% of organizations now use AI in at least one business function, and Gen AI adoption has reached 72%. But only 39% report any EBIT impact at the enterprise level, and most of those report less than 5%. Only about 6% qualify as “AI high performers” (McKinsey, 2025).

The picture gets worse the closer you look. While 75% of executives rank AI among their top three priorities, only 5% of companies achieve AI value at scale (what BCG calls “future-built”). A full 60% are laggards reporting minimal gains despite substantial investment. Fewer than 30% of CEOs were satisfied with their AI returns (BCG, 2025).

Generative AI has entered the Trough of Disillusionment on Gartner’s 2025 Hype Cycle, with over 30% of GenAI projects abandoned after proof of concept. And across the enterprise landscape, 95% of GenAI pilots fail to deliver measurable P&L impact, despite $30-40 billion in total investment (MIT NANDA, 2025).

Marketing sits at the epicenter of this paradox. It has been the #1 function for AI adoption for eight consecutive years (McKinsey, 2025). More than half of all enterprise GenAI budgets flow into marketing and sales tools (Menlo Ventures, 2025). Yet most of that spending produces no measurable financial return.

👉 Adoption was never the issue. What’s missing is impact.

Why Enterprises Can’t Use AI: Five Barriers That Reinforce Each Other

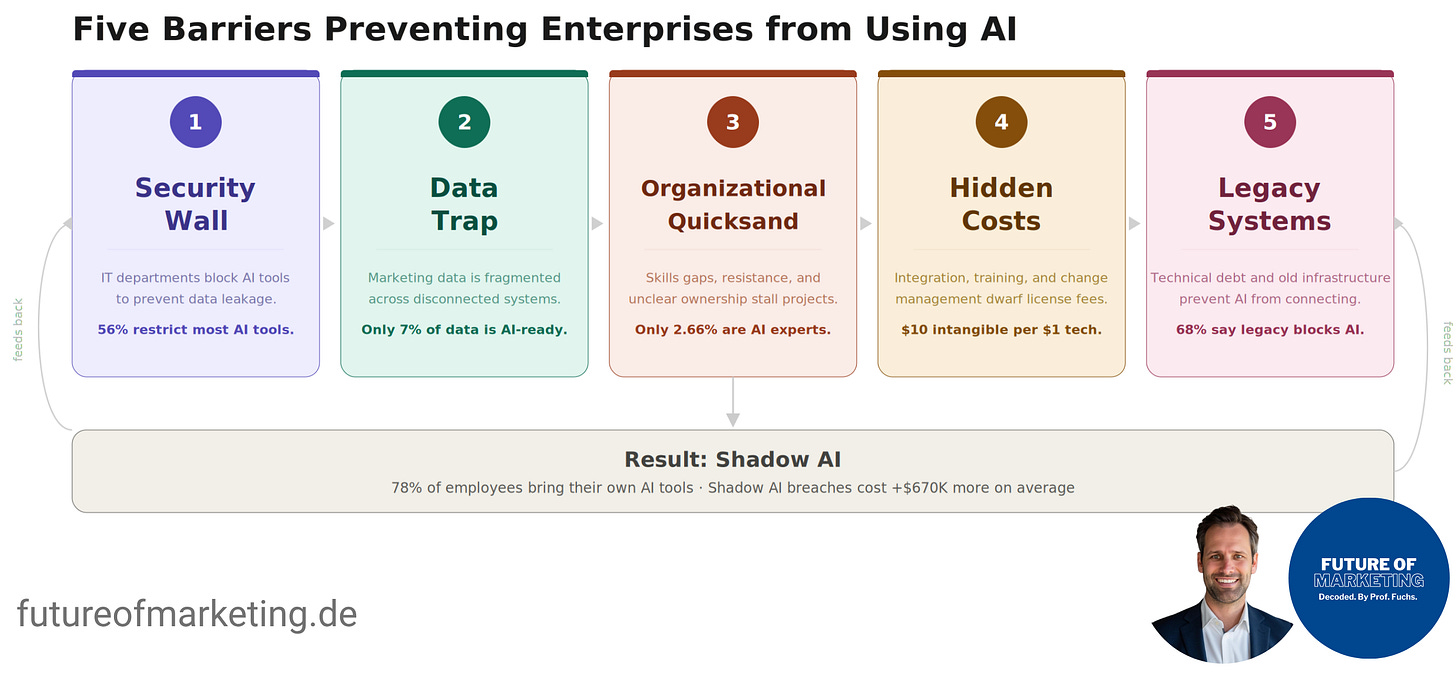

The barriers preventing large enterprises from using AI’s full potential in marketing aren’t independent obstacles. They form a self-reinforcing system where each barrier makes the others harder to overcome.

Barrier 1: The Security Wall

The single largest barrier is fear of data leakage. And the fear is well-founded.

34.8% of corporate data entered into AI tools is sensitive, up from 10.7% two years earlier (Cyberhaven, 2025). GenAI now accounts for 32% of all corporate data exfiltration, making it the #1 vector for data leaving enterprise controls (LayerX, 2025).

The Samsung incident crystallized these fears into policy. In March 2023, Samsung’s semiconductor division allowed employees to use ChatGPT. Within 20 days, three separate leaks occurred: an engineer pasted proprietary source code, another submitted equipment test sequences, and a third uploaded meeting transcripts. Samsung banned all generative AI tools company-wide. JPMorgan Chase, Goldman Sachs, Apple, Amazon, and Verizon followed within months.

Today, 56% of enterprises block most AI tools while maintaining narrow allowlists. Enterprise procurement cycles stretch 3-12 months for each new tool. By the time something clears security audits, SOC 2 validation, and legal review, it may already be obsolete. AI capabilities launch weekly. Approval takes quarters.

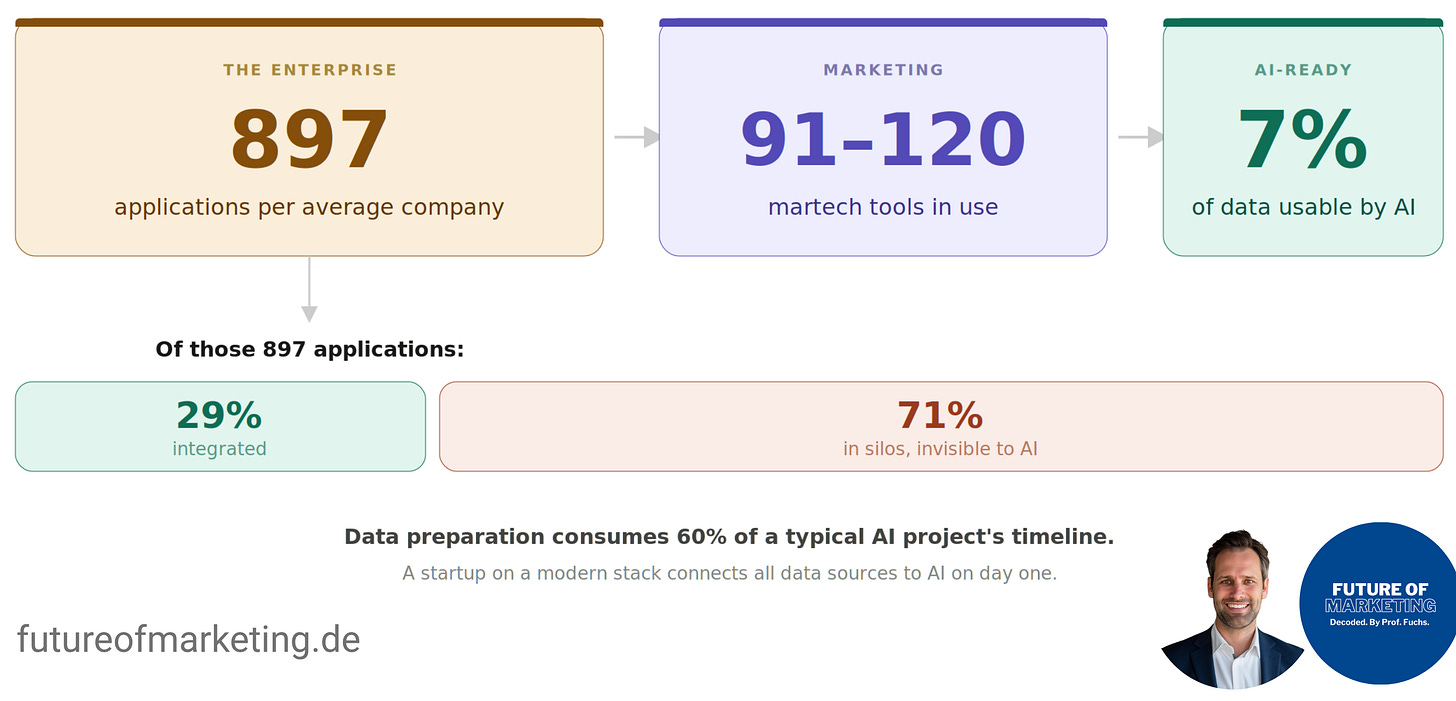

Barrier 2: The Data Architecture Trap

Even when security clears the path, enterprise marketing data is almost never in a state that AI can use.

Only 7% of enterprises consider their data “completely ready” for AI (Cloudera & Harvard Business Review, 2026). The average enterprise marketing organization runs 91-120 distinct martech tools, selected from a landscape of 15,384 commercial solutions (Brinker, 2025). And the average enterprise uses 897 applications, with only 29% integrated (MuleSoft, 2025). That means 71% of enterprise applications exist in silos, invisible to AI.
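To make the integration gap tangible, here is a back-of-the-envelope sketch that turns the MuleSoft percentages into application counts. The only inputs are the two figures quoted above; the rounding to whole applications is my own simplification.

```python
# Figures quoted in the text (MuleSoft, 2025)
total_apps = 897            # average number of applications per enterprise
integrated_share_pct = 29   # percent of applications that are integrated

# Rounded to whole applications for readability (an illustrative simplification)
integrated = round(total_apps * integrated_share_pct / 100)
siloed = total_apps - integrated

print(f"Integrated applications: {integrated}")
print(f"Siloed applications:     {siloed}")
```

In absolute terms, that is roughly 260 connected applications against about 637 that an AI system cannot see.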

Data preparation consumes roughly 60% of a typical AI project’s timeline. And as Gartner warns: “High-quality data does not equate to AI-ready data.” AI-ready data requires lineage tracking, diversity, low-latency delivery, and discoverability across systems. Most enterprise marketing data meets none of these requirements.

Meanwhile, a startup on a modern cloud-native stack connects all data sources to AI on day one. No silos to cross, no integration projects to fund.

Barrier 3: Organizational Quicksand

The most underappreciated barriers are human, not technical.

By 2026, 20% of organizations will use AI to flatten structures, eliminating more than half of current middle management positions (Gartner, 2025). This creates rational resistance. Marketing middle managers who oversee campaign setup, A/B testing, performance reporting, and content workflows see their core functions being automated. Gartner observed an “AI Dropout Effect”: employees who perceive AI as a threat to their identity disengage, burn out, or leave.

The skills gap compounds the problem. Only 2.6% of marketers identify as AI experts (CoSchedule, 2026). And nobody owns the outcome: the CFO expects headcount reduction, the CMO wants capacity expansion, the CEO wants growth. Without unified ownership, AI pilots succeed technically but fail organizationally.

Barrier 4: Hidden Costs

Enterprise AI costs dwarf their sticker prices. Total cost of ownership runs 3-5x the advertised subscription price when accounting for integration, customization, training, and maintenance. For every $1 of tangible technology investment, companies spend up to $10 on intangible costs like process redesign, reskilling, and organizational transformation (Pereira et al., 2026). And 77% of the hardest challenges in AI deployments turn out to be these intangible costs, not the technology itself.
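A rough sketch of what the 3-5x multiplier means for a budget line. The 1,000-seat rollout is a hypothetical example, the $30 per user per month is the Microsoft 365 Copilot list price mentioned later in this article, and the function name is mine. Treat it as an illustration of the arithmetic, not a costing model.

```python
def total_cost_range(annual_subscription: float,
                     tco_low: float = 3.0,
                     tco_high: float = 5.0) -> tuple[float, float]:
    """Return the (low, high) total cost of ownership for a given sticker price.

    The 3x and 5x defaults are the multiplier range quoted in the text.
    """
    return annual_subscription * tco_low, annual_subscription * tco_high


# Hypothetical rollout: 1,000 seats at $30 per user per month
seats = 1_000
sticker = seats * 30 * 12  # annual subscription spend in dollars
low, high = total_cost_range(sticker)

print(f"Sticker price: ${sticker:,.0f}")
print(f"Estimated TCO: ${low:,.0f} to ${high:,.0f}")
```

Under these assumptions, a $360,000 subscription line turns into $1.08 million to $1.8 million per year once integration, customization, training, and maintenance are counted.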

Barrier 5: Legacy Systems and Technical Debt

68% of IT decision-makers say legacy systems prevent their organization from fully embracing AI (Pegasystems, 2025). Technical debt constrains AI success for 81% of executives, consuming up to 29% of AI budgets (IBM, 2025). An estimated 60-80% of enterprise IT budgets go to maintaining existing systems, leaving little for innovation.

The Inevitable Result: Shadow AI

When official AI tools are restricted, slow, or disconnected from useful data, employees route around them.

78% of AI users bring their own tools to work (Microsoft, 2024).

73.8% of workplace ChatGPT usage occurs on personal accounts lacking enterprise privacy controls (LayerX, 2025). Shadow AI is highest in marketing and sales teams (UpGuard, 2024). And shadow-AI-associated data breaches cost organizations $670,000 more on average (IBM, 2025).

The organization creates the restriction.

The restriction creates the workaround.

The workaround creates the risk.

That’s the cycle.

And nowhere is this cycle more visible than in the tools enterprises have already deployed.

The Dumb Copilot Problem

The experience at Mercedes is symptomatic. The data shows it’s happening everywhere.

Microsoft 365 Copilot, priced at $30/user/month, has achieved only 3.3% penetration across Microsoft’s ~450 million commercial M365 subscribers. Its paid subscriber market share fell from 18.8% to 11.5% between July 2025 and January 2026, while ChatGPT grew to 55.2%.

Even Microsoft CEO Satya Nadella is frustrated. In December 2025, he emailed engineers stating that Copilot’s connections with services like Gmail and Outlook “for the most part don’t really work.” Perhaps most telling: approximately 75% of employees in Microsoft’s own Copilot division paid out of pocket for ChatGPT because Microsoft wouldn’t expense the rival subscription. Salesforce’s Agentforce tells a similar story: only 5,000 deals after launch, pricing changed twice within a year.

The tools have potential. But potential locked inside organizational constraints produces nothing.

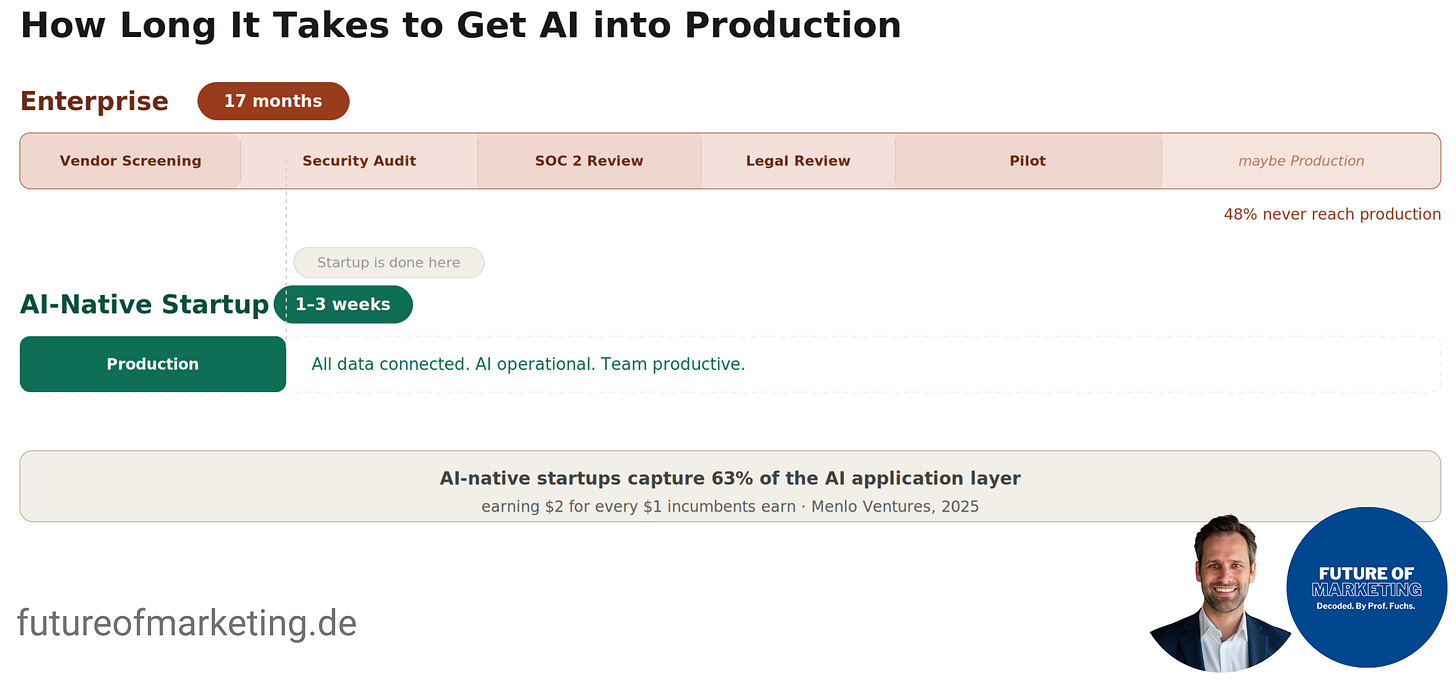

Meanwhile, Startups Deploy AI in Days

The speed differential between startups and enterprises goes well beyond incremental. We’re looking at an order of magnitude.

Enterprise AI projects average 17 months from initiation to production (McKinsey, 2025). Startups deploying focused AI use cases achieve working results in 1-3 weeks. And the market consequences are already visible: AI-native startups now capture 63% of the AI application layer, up from 36% the prior year, earning nearly $2 for every $1 incumbents earn (Menlo Ventures, 2025).

The Cursor vs. GitHub Copilot case captures the dynamic perfectly. Cursor, an AI-native code editor from a company founded by four MIT graduates and staffed by roughly 150 employees, reached $2 billion ARR by Q1 2026, competing directly with GitHub Copilot, backed by Microsoft’s $37.5 billion AI investment. The company reached $200M before hiring a single enterprise sales representative.

The efficiency numbers are staggering. AI-native startups average $3.48 million in revenue per employee, approximately 5.7x higher than the $611K average at leading traditional SaaS firms. AI companies grow 4x faster while operating with 7-8x fewer employees per dollar of revenue (Emergence Capital, 2025).
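A quick check of the revenue-per-employee ratio, plus what it implies for headcount. The two per-employee figures come from the paragraph above; the $100 million revenue target is a hypothetical number I chose purely for illustration.

```python
# Figures quoted in the text
ai_native_rev_per_employee = 3_480_000    # dollars
traditional_rev_per_employee = 611_000    # dollars

ratio = ai_native_rev_per_employee / traditional_rev_per_employee
print(f"Revenue per employee ratio: {ratio:.1f}x")

# Hypothetical revenue target, chosen only for illustration
target_revenue = 100_000_000
ai_native_headcount = round(target_revenue / ai_native_rev_per_employee)
traditional_headcount = round(target_revenue / traditional_rev_per_employee)

print(f"Headcount at AI-native efficiency:   {ai_native_headcount}")
print(f"Headcount at traditional efficiency: {traditional_headcount}")
```

At these averages, the same $100 million in revenue takes about 29 people in an AI-native company and about 164 in a traditional SaaS firm.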

And here’s the most counterintuitive finding. OECD data from 2025 shows that for marketing and sales purposes specifically, small enterprises using AI exhibit a slightly higher utilization share than large ones. It’s the first technology adoption cycle in history where small firms don’t trail large ones in the most commercially important function.

That’s the Innovator’s Dilemma, measured in real time.

A Playbook for Marketing Leaders (What the 5% of Companies Do Differently)

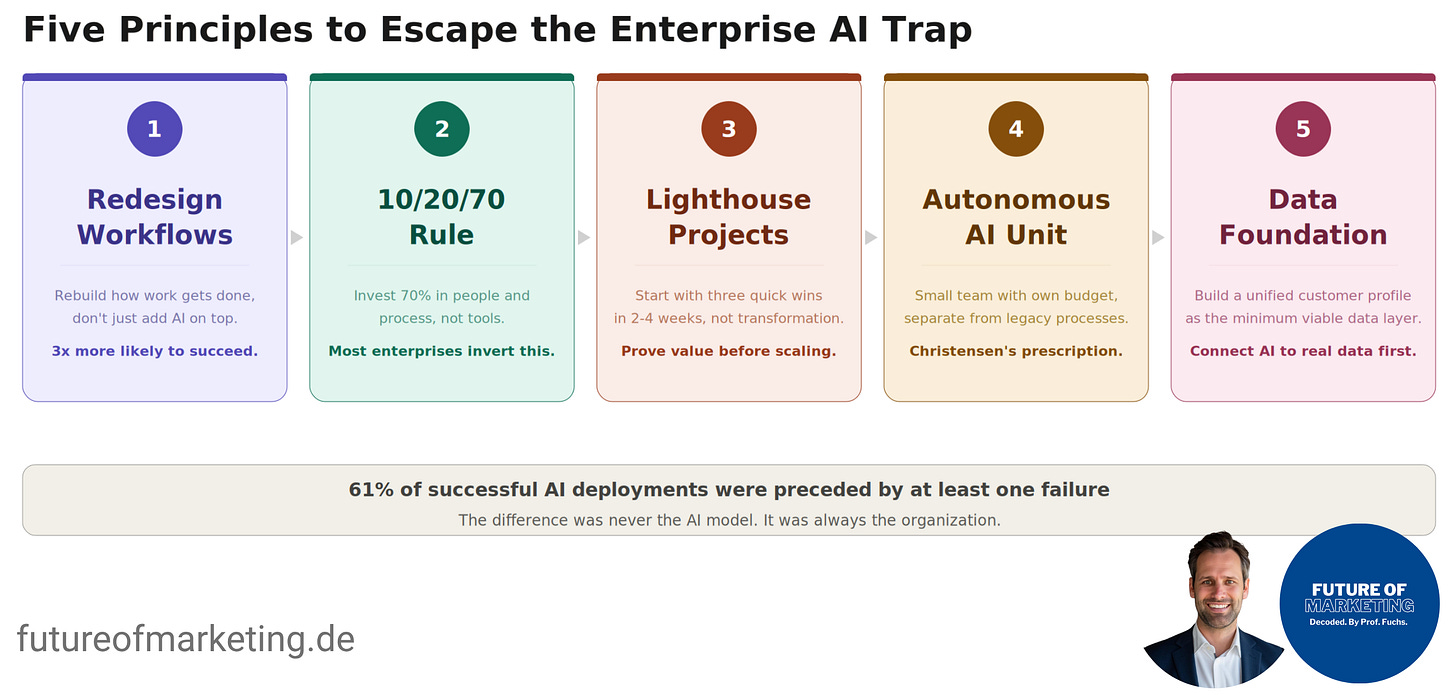

The gap between the 5% creating value and the 60% stagnating was never about the AI model. It was about the organization. And the evidence on what works is remarkably consistent across sources. Here are five guiding principles:

Principle 1: Redesign Workflows, Don’t Just Add Tools

This is the single strongest predictor of AI impact. AI high performers are nearly 3x more likely to have fundamentally redesigned individual workflows: 55% of high performers did this versus about 20% of others (McKinsey, 2025).

Studying 51 successful deployments across 41 organizations, the Stanford Enterprise AI Playbook found that “escalation-based” AI models (where AI handles 80%+ of work autonomously and humans review only exceptions) delivered 71% median productivity gains, compared to 30% for “approval-based” models requiring full human validation. The researchers’ conclusion: “Same technology, same use cases, vastly different outcomes. The difference was never the AI model. It was always the organization” (Pereira et al., 2026).
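The difference between the two operating models can be sketched as a routing rule. This is a minimal illustration, not the playbook’s method: the `Task` class, the confidence score, the 0.8 threshold, and the sample tasks are all assumptions of mine. The source describes the models only in terms of who reviews what.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    confidence: float  # hypothetical model-reported confidence, 0.0 to 1.0


def needs_human_review(task: Task, mode: str, threshold: float = 0.8) -> bool:
    """Decide whether a human has to look at this task before it ships."""
    if mode == "approval":
        # Approval-based: every output waits for human validation.
        return True
    if mode == "escalation":
        # Escalation-based: only low-confidence exceptions reach a human.
        return task.confidence < threshold
    raise ValueError(f"unknown mode: {mode}")


# Hypothetical marketing tasks with made-up confidence scores
tasks = [
    Task("lifecycle email variant", 0.95),
    Task("campaign QA check", 0.91),
    Task("performance narrative", 0.88),
    Task("content brief", 0.84),
    Task("pricing claim in ad copy", 0.55),
]

review_counts = {}
for mode in ("approval", "escalation"):
    reviewed = [t.name for t in tasks if needs_human_review(t, mode)]
    review_counts[mode] = len(reviewed)
    print(f"{mode}: {len(reviewed)} of {len(tasks)} tasks need review")
```

With these sample numbers, the approval model sends all five tasks to a human, while the escalation model sends only the one risky exception. The technology is identical in both cases; only the organizational rule changes.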

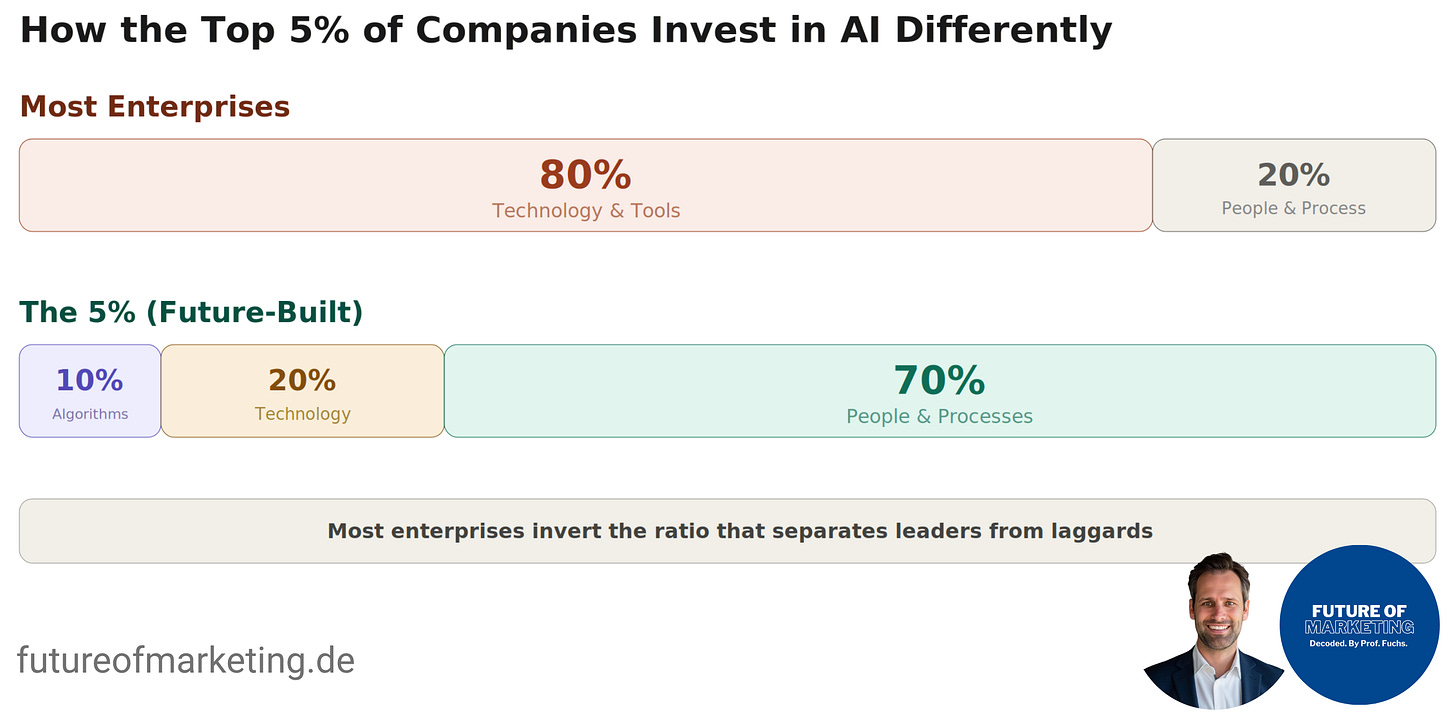

Principle 2: Apply the 10/20/70 Rule

One of the most useful frameworks for AI investment comes from BCG: the 10/20/70 allocation. 10% on algorithms and models, 20% on technology infrastructure, and 70% on people and processes. Most enterprises invert this, spending roughly 80% on platforms and tools while starving the organizational transformation that determines success (BCG, 2025).

The 70% includes redesigning decision rights, building AI literacy across the marketing team, creating feedback loops, and managing organizational change. Effective upskilling follows three stages: AI literacy (reduce fear, build confidence, takes hours), AI adoption (embed tools into core workflows, 3-6 months), and AI domain transformation (competitive advantages through domain-specific applications, 12-24 months) (McKinsey, 2025).
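To see what the 10/20/70 rule means for an actual budget, here is a minimal allocation sketch. The function name, the bucket labels, and the $1 million budget are hypothetical; only the 10/20/70 split itself comes from BCG.

```python
def allocate_10_20_70(total_budget: float) -> dict[str, float]:
    """Split an AI budget according to the 10/20/70 rule."""
    shares_pct = {
        "algorithms_and_models": 10,
        "technology_infrastructure": 20,
        "people_and_processes": 70,
    }
    return {bucket: total_budget * pct / 100 for bucket, pct in shares_pct.items()}


# Hypothetical annual AI budget for a marketing organization
allocation = allocate_10_20_70(1_000_000)
for bucket, amount in allocation.items():
    print(f"{bucket:<28} ${amount:>10,.0f}")
```

For a $1 million budget, that puts $700,000 into people and processes. An organization that spends roughly 80% on platforms and tools has at most $200,000 left for the same work.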

Principle 3: Start with Lighthouse Projects, Not Transformation Programs

The fastest path from paralysis to progress is three simultaneous quick-win projects, each deployed in 2-4 weeks. Select one velocity win (content brief generation or multi-channel repurposing), one operations win (campaign QA or automated performance narratives), and one pipeline win (lifecycle email variants or AI-powered lead scoring). Each needs clear baselines, success metrics that finance recognizes, and human review built in.

BCG’s “Freedom Within a Frame” model provides the governance architecture: IT maintains the chassis (data, infrastructure, compliance safeguards) while marketing drives the design (configuring agents to deliver outcomes). This replaces the adversarial dynamic where marketing requests tools and IT blocks them.

Principle 4: Build a Christensen-Style Autonomous AI Unit

Christensen’s (1997) own prescription maps directly to what the data shows works. He wrote that the only instances in which mainstream firms have successfully established a timely position in a disruptive technology were those where managers set up an autonomous organization with independent processes and values.

For marketing, this means a small team (5-8 people) that reports separately from mainstream operations, has its own budget authority, is measured on learning velocity and experiments run (not traditional campaign KPIs), and has permission to fail fast. The team needs a hybrid strategist-technologist as lead, marketing technologists who can build prompts and configure workflows, a data analyst connecting AI to marketing data, and dotted-line access to IT security and legal.

Principle 5: Fix the Data Foundation with a Minimum Viable Approach

You don’t need to solve the entire data architecture. You need a unified customer profile accessible across CRM, marketing automation, and analytics. Customer Data Platforms serve as the integration layer: the CDP market reached $3.28 billion in 2025 and is projected to exceed $37 billion by 2030.

Worth watching: the Model Context Protocol (MCP), introduced by Anthropic in late 2024 and now adopted by OpenAI, Google, Microsoft, and AWS. MCP standardizes how AI systems connect to enterprise data sources, with 97 million monthly SDK downloads already. Think of it as “USB-C for AI applications,” replacing the custom integration problem that makes enterprise AI so slow to deploy.

The Compounding Cost of Waiting

The most dangerous response to enterprise AI complexity is to wait for conditions to improve.

The gap between leaders and laggards is self-perpetuating: future-built companies invest more, generate better returns, and reinvest those returns into stronger capabilities. They achieve 1.7x higher revenue growth and 3.6x greater total shareholder return than laggards (BCG, 2025). That gap compounds every quarter.

Perhaps the most liberating finding for marketing leaders paralyzed by complexity: 61% of successful AI deployments were preceded by at least one failure. The researchers concluded: “The difference was never the AI model. It was always the organization. Its readiness, its processes, its leadership, its willingness to change and fail” (Pereira et al., 2026).

For CMOs, this means AI adoption can’t be delegated to IT as a technology initiative. It requires strategic leadership from the marketing function itself.

Key Takeaways

The AI Innovator’s Dilemma in marketing is already observable and quantifiable. 88% of enterprises adopt AI, but only 5-6% generate substantial value. AI-native startups now earn nearly $2 for every $1 incumbents earn in the application layer.

The primary barrier is organizational architecture, not technology: Processes, values, and governance structures designed for a pre-AI world prevent AI from reaching its potential. Christensen diagnosed this mechanism in 1997. The data from 2025 confirms it.

BCG’s 10/20/70 rule reveals the root mismatch: Most enterprises spend roughly 80% on platforms and tools, leaving little for people. The 5% who succeed do the opposite, dedicating 70% of resources to people and processes.

The “dumb Copilot” problem reflects a structural condition, not a product flaw: Enterprise AI tools deployed into organizations governed by pre-AI processes will remain limited, because the organization structurally prevents them from accessing the data and context they need.

Christensen’s prescription remains the most empirically validated path: Create autonomous units with independent processes and values. Start with lighthouse projects. Redesign workflows, don’t just add AI on top. The window to act is closing as the reinvestment flywheel widens the gap between leaders and laggards.

My friend at Mercedes will keep using a Copilot that can’t access what it needs. His AI-native startup competitors won’t have that problem. The question for marketing leaders is which side of that divide their organization ends up on.

Christensen already told us how the story usually ends. But he also told us what the 5% do differently. The playbook exists. The choice is organizational.

Yours,

Prof. Dr. Andreas Fuchs 🦊🎓

References

BCG. (2025). AI leaders outpace laggards in revenue growth and cost savings. bcg.com

BCG. (2025). Are you generating value from AI? The widening gap. bcg.com

BCG. (2025). Closing the AI impact gap. bcg.com

BCG. (2025). Agents accelerate the next wave of AI value creation. bcg.com

Brinker, S. (2025). 2025 Marketing Technology Landscape Supergraphic. chiefmartec.com

Christensen, C. M. (1997). The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail. Harvard Business Review Press.

Cloudera & Harvard Business Review Analytic Services. (2026). Enterprise AI readiness report. cloudera.com

Cyberhaven. (2025). Sensitive data flowing into AI tools. cyberhaven.com

Emergence Capital. (2025). What makes AI companies different. joinpavilion.com

Gartner. (2025). Hype Cycle for Artificial Intelligence. gartner.com

Gartner. (2024). 30 percent of generative AI projects will be abandoned after proof of concept. gartner.com

Gartner. (2025). AI-ready data. gartner.com

Gartner. (2025). Human readiness barriers to enterprise AI. ciodive.com

Henderson, R. M., & Clark, K. B. (1990). Architectural innovation: The reconfiguration of existing product technologies and the failure of established firms. Administrative Science Quarterly, 35(1), 9–30.

IBM. (2025). Technical debt and AI ROI. ibm.com

IBM. (2025). Cost of a data breach report. newsroom.ibm.com

LayerX. (2025). Enterprise AI and SaaS data security report. layerxsecurity.com

McKinsey. (2025). The state of AI. mckinsey.com

McKinsey. (2025). Redefine AI upskilling as a change imperative. mckinsey.com

Menlo Ventures. (2025). The state of generative AI in the enterprise. menlovc.com

MIT NANDA. (2025). 95 percent of generative AI pilots at companies failing. fortune.com

MuleSoft. (2025). Connectivity benchmark report. salesforce.com

OECD. (2025). AI adoption by small and medium-sized enterprises. oecd.org

Pegasystems. (2025). Technical debt stifling path to AI adoption. businesswire.com

Pereira, F., Graylin, A., & Brynjolfsson, E. (2026). Enterprise AI Playbook. Stanford Digital Economy Lab. digitaleconomy.stanford.edu

Sjerven, J. (2025). Copilot market adoption trends. stackmatix.com